
By Cade Metz

Cade Metz writes about artificial intelligence and other emerging technologies.

Researchers at Microsoft claim that A.I. technology shows the ability to understand the way people do.

Critics say those scientists are kidding themselves.

When computer scientists at Microsoft started to experiment with a new artificial intelligence system last year, they asked it to solve a puzzle that should have required an intuitive understanding of the physical world.

The researchers were startled by the ingenuity of the A.I. system's answer.

Its clever suggestion made the researchers wonder whether they were witnessing a new kind of intelligence.

In March, they published a 155-page research paper arguing that the system was a step toward artificial general intelligence, or A.G.I., which is shorthand for a machine that can do anything the human brain can do.

The paper was published on an internet research repository.

Microsoft, the first major tech company to release a paper making such a bold claim, stirred one of the tech world's testiest debates.

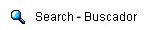

Peter Lee, the head of Microsoft Research, was initially skeptical of what he was seeing the A.I. system create. Credit: Meron Tekie Menghistab for The New York Times

Microsoft's research paper, provocatively called "Sparks of Artificial General Intelligence," goes to the heart of what technologists have been working toward, and fearing, for decades.

Making A.G.I. claims can be a reputation killer for computer scientists.

What one researcher believes is a sign of intelligence can easily be explained away by another, and the debate often sounds more appropriate to a philosophy club than a computer lab.

Last year, Google fired a researcher who claimed that a similar A.I. system was sentient, a step beyond what Microsoft has claimed. A sentient system would not just be intelligent. It would be able to sense or feel what is happening in the world around it.

But some believe the industry has in the past year or so inched toward something that can't be explained away.

Microsoft has reorganized parts of its research labs to include multiple groups dedicated to exploring the idea.

One will be run by Sébastien Bubeck, who was the lead author on the Microsoft A.G.I. paper.

About five years ago, companies like Google, Microsoft and OpenAI began building large language models, or L.L.M.s. Those systems often spend months analyzing vast amounts of digital text, including books, Wikipedia articles and chat logs.

By pinpointing patterns in that text, they learned to generate text of their own.

The technology the Microsoft researchers were working with, OpenAI's GPT-4, is considered the most powerful of those systems.

Microsoft is a close partner of OpenAI and has invested $13 billion in the San Francisco company.

The researchers included Dr. Bubeck, a 38-year-old French expatriate and former Princeton University professor. One of the first things he and his colleagues did was ask GPT-4 to write a mathematical proof showing that there are infinitely many prime numbers, and to do it in a way that rhymed.

The technology's poetic proof was so impressive, both mathematically and linguistically, that he found it hard to understand what he was chatting with.

For several months, he and his colleagues documented complex behavior exhibited by the system and believed it demonstrated a "deep and flexible understanding" of human concepts and skills.

When people use GPT-4, they are,

When they asked the system to draw a unicorn using a programming language called TikZ, it instantly generated a program that could draw a unicorn.

When they removed the stretch of code that drew the unicorn's horn and asked the system to modify the program so that it once again drew a unicorn, it did exactly that.

They asked it to write a program that took in a person's age, sex, weight, height and blood test results and judged whether they were at risk of diabetes.

They asked it to write a letter of support for an electron as a U.S. presidential candidate, in the voice of Mahatma Gandhi, addressed to his wife.

And they asked it to write a Socratic dialogue that explored the misuses and dangers of L.L.M.s.

It did all this in a way that seemed to show an understanding of fields as disparate as politics, physics, history, computer science, medicine and philosophy, while combining its knowledge across them.

Some A.I. experts saw the Microsoft paper as an opportunistic effort to make big claims about a technology that no one quite understood.

Researchers also argue that general intelligence requires a familiarity with the physical world, which GPT-4 in theory does not have.

Sébastien Bubeck was the lead author of Microsoft's A.G.I. paper.

Dr. Bubeck and Dr. Lee said they were unsure how to describe the system's behavior and ultimately settled on "Sparks of A.G.I." because they thought it would capture the imagination of other researchers.

Because Microsoft researchers were testing an early version of GPT-4 that had not been fine-tuned to avoid hate speech, misinformation and other unwanted content, the claims made in the paper cannot be verified by outside experts.

Microsoft says that the system available to the public is not as powerful as the version its researchers tested.

There are times when systems like GPT-4 seem to mimic human reasoning, but there are also times when they seem terribly dense.

"These behaviors are not always consistent," said Ece Kamar, a Microsoft researcher.

Alison Gopnik, a professor of psychology who is part of the A.I. research group at the University of California, Berkeley, said that systems like GPT-4 were no doubt powerful, but it was not clear that the text generated by these systems was the result of something like human reasoning or common sense.
